How to Turn Your Knowledge Graph Embeddings into Generative Models via Probabilistic Circuits

Published in NeurIPS 2023

Authors: Lorenzo Loconte, Nicola Di Mauro, Robert Peharz, Antonio Vergari

Project URL: https://openreview.net/forum?id=RSGNGiB1q4

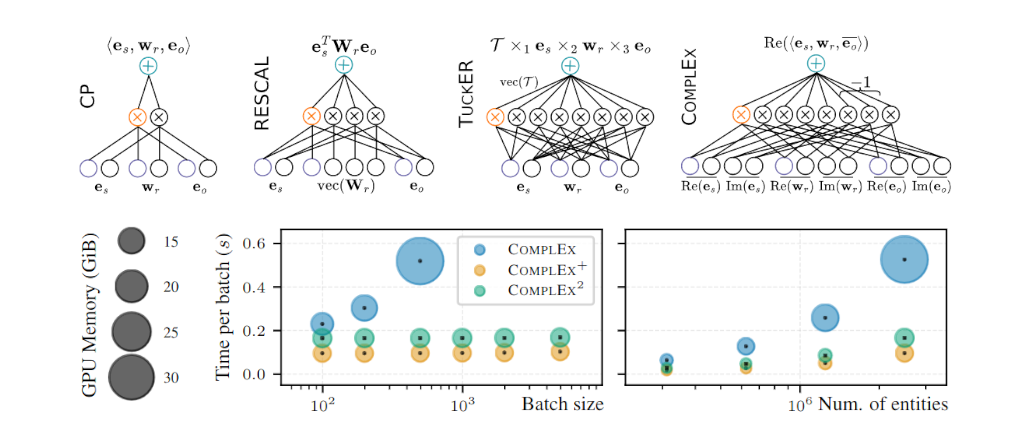

Abstract: Some of the most successful knowledge graph embedding (KGE) models for link prediction -- CP, RESCAL, TuckER, ComplEx -- can be interpreted as energy-based models. Under this perspective they are not amenable to exact maximum-likelihood estimation (MLE) or sampling, and they struggle to integrate logical constraints. This work re-interprets the score functions of these KGEs as circuits -- constrained computational graphs allowing efficient marginalisation. Then, we design two recipes to obtain efficient generative circuit models by either restricting their activations to be non-negative or squaring their outputs. Our interpretation comes with little or no loss of performance for link prediction, while the circuits framework unlocks exact learning by MLE, efficient sampling of new triples, and a guarantee that logical constraints are satisfied by design. Furthermore, our models scale more gracefully than the original KGEs on graphs with millions of entities.
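As a rough illustration of the two recipes named in the abstract, the sketch below applies them to a CP-style score function, where a triple (s, r, o) is scored as a sum over rank-wise products of subject, relation, and object embeddings. It is not the authors' implementation: the variable names, the toy sizes, the random embeddings, and the use of `exp` to enforce non-negativity are all assumptions made for illustration. The point it tries to show is that in both cases the normalisation constant over all triples can be computed without enumerating them.

```python
import numpy as np

rng = np.random.default_rng(0)
n_entities, n_relations, rank = 5, 3, 4  # toy sizes (assumption)

# Hypothetical CP-style embeddings: separate matrices for subjects,
# relations, and objects, each with `rank` columns.
S = rng.normal(size=(n_entities, rank))
R = rng.normal(size=(n_relations, rank))
O = rng.normal(size=(n_entities, rank))


def cp_scores(S, R, O):
    """Score every triple: scores[s, r, o] = sum_i S[s, i] * R[r, i] * O[o, i]."""
    return np.einsum("si,ri,oi->sro", S, R, O)


# Recipe 1 (non-negative restriction): make all embeddings non-negative,
# here via exp (one possible choice). The sum over all triples then
# factorises into per-matrix column sums.
Sp, Rp, Op = np.exp(S), np.exp(R), np.exp(O)
Z_nonneg = np.sum(Sp.sum(axis=0) * Rp.sum(axis=0) * Op.sum(axis=0))
p_nonneg = cp_scores(Sp, Rp, Op) / Z_nonneg

# Recipe 2 (squaring): keep real-valued embeddings and square the score.
# The sum of squared scores reduces to an elementwise product of the
# three rank-by-rank Gram matrices.
Z_squared = np.sum((S.T @ S) * (R.T @ R) * (O.T @ O))
p_squared = cp_scores(S, R, O) ** 2 / Z_squared

# Brute-force check, feasible only at toy scale: both tables are
# valid probability distributions over all triples.
print(np.isclose(p_nonneg.sum(), 1.0), np.isclose(p_squared.sum(), 1.0))
```

The full score tensor is materialised here only to check the result. Computing `Z_nonneg` and `Z_squared` themselves touches each embedding matrix once, which is the kind of efficient marginalisation the abstract attributes to circuits.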

Bibtex:

@inproceedings{loconte2023how,
  title={How to Turn Your Knowledge Graph Embeddings into Generative Models},
  author={Lorenzo Loconte and Nicola Di Mauro and Robert Peharz and Antonio Vergari},
  booktitle={Thirty-seventh Conference on Neural Information Processing Systems},
  year={2023},
  url={https://openreview.net/forum?id=RSGNGiB1q4}
}